The app started a few months ago and took form as Offline Dictionary. The Chrome app version is much more refined, aesthetically and functionally. The search bar and autocomplete are much less obtrusive, and there are gradients and box shadows, a sure indication of progress :) The most significant difference is that it includes the entire English Wikipedia, a pretty close approximation of the sum of all human knowledge.

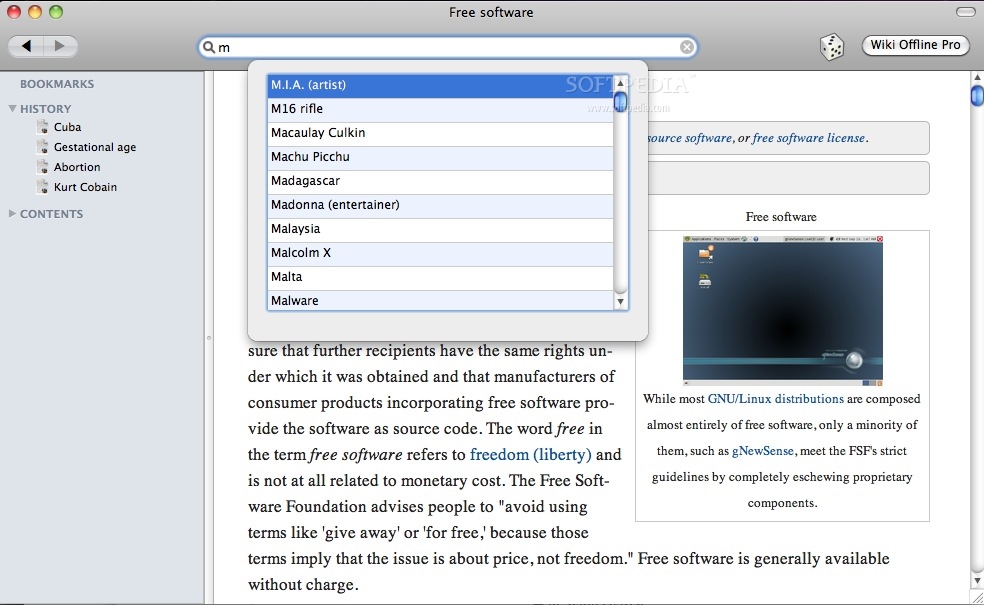

Offline Wiki Chrome Webstore App
23 April 2011

Offline Wiki for Chrome is now on the Web Store.

While the top 300k articles might ostensibly seem far too narrow to delve deep into the long tail, the breadth of this meager 1/25th of articles consistently surprises me with its depth. The advantage is that, at 1GB, it's relatively easy to fit onto any system.

The algorithm which strips extraneous content has been made far more sophisticated than the original series of regular expressions. This enables greater compression and less accidentally omitted content.

On the application end, the app has switched from a GWT-compiled LZMA SDK to a speedy, pure JavaScript decoder. This makes page loads significantly speedier and allows greater compression ratios, since individual blocks can be made larger (256KB instead of 100KB). It also now uses WebGL Typed Arrays to further speed things up, such as when sending data to and from the WebWorker thread.

The interface was redesigned with CSS media queries to dynamically transition between different modes in response to different viewing environments. One of the interface's two regions is the fixed-position, recessed left panel, which holds the page title, a search bar, controls and the page outline. This panel collapses down to a toolbar header automatically when screen real estate is limited. It uses an Apple-esque noise texture background.

Downloads happen in little units called chunks (they're half a megabyte for the dump file and about four kilobytes for the index). The local file can be built up out of order. While online, all storage operations check the virtual file, IndexedDB, or Web SQL database. If the data is not there, the app transparently uses an XMLHttpRequest to fulfill the request and caches the result to disk in the respective persistence mechanism. A bitset is used to keep track of which chunks are already downloaded and which still need to be downloaded.
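The chunk bookkeeping described in this post (fixed-size chunks, a bitset of what has been downloaded, and a transparent fetch on a miss) can be sketched roughly as follows. This is a minimal Python illustration of the idea, not the app's actual JavaScript: the `ChunkedFile` class, its method names, and the `fetch_chunk` callback (a stand-in for the XMLHttpRequest) are all hypothetical, and an in-memory `bytearray` stands in for the on-disk store.

```python
from typing import Callable, List

CHUNK_SIZE = 512 * 1024  # half a megabyte, the dump-file chunk size the post mentions


class ChunkedFile:
    """Local copy of a remote file, filled in chunk by chunk in any order."""

    def __init__(self, total_size: int, fetch_chunk: Callable[[int], bytes],
                 chunk_size: int = CHUNK_SIZE) -> None:
        self.total_size = total_size
        self.chunk_size = chunk_size
        # Hypothetical callback standing in for the network request.
        self.fetch_chunk = fetch_chunk
        self.num_chunks = (total_size + chunk_size - 1) // chunk_size
        # In-memory stand-in for the persistent store.
        self.data = bytearray(total_size)
        # The bitset: one bit per chunk, set once the chunk is stored locally.
        self.have = bytearray((self.num_chunks + 7) // 8)

    def has_chunk(self, index: int) -> bool:
        return bool(self.have[index >> 3] & (1 << (index & 7)))

    def ensure_chunk(self, index: int) -> None:
        """Fetch and cache a chunk unless the bitset says it is already local."""
        if self.has_chunk(index):
            return
        start = index * self.chunk_size
        payload = self.fetch_chunk(index)
        self.data[start:start + len(payload)] = payload
        self.have[index >> 3] |= 1 << (index & 7)

    def read(self, offset: int, length: int) -> bytes:
        """Read a byte range, downloading only the chunks that are missing."""
        if length <= 0 or offset >= self.total_size:
            return b""
        end = min(offset + length, self.total_size)
        first = offset // self.chunk_size
        last = (end - 1) // self.chunk_size
        for index in range(first, last + 1):
            self.ensure_chunk(index)
        return bytes(self.data[offset:end])

    def missing_chunks(self) -> List[int]:
        """Chunks that still need to be downloaded."""
        return [i for i in range(self.num_chunks) if not self.has_chunk(i)]
```

Because every read goes through `ensure_chunk`, the caller never needs to know whether a byte range came from the local copy or from the network, and `missing_chunks` gives a background downloader its to-do list.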
There's just something incredibly alluring about the concept of holding the sum of human knowledge with you at all times. While near-ubiquitous connectivity alleviates this to a certain extent, the momentary lapses of networking are incredibly corrosive to an information-dependent mentality. Wikipedia never ceases to amaze me and, while I've tried in the past to encapsulate part of its sheer awesomeness, this marks a much more significant attempt.

The differences start even before the data gets to the application. The preprocessing toolchain was entirely rewritten for a multitude of reasons. First of all, it compresses not the entirety, but rather the most popular subset of the English Wikipedia. Two dumps are distributed at time of writing, the top 1000 articles and the top 300,000, requiring approximately 10MB and 1GB, respectively.

Hi. I'm Kevin Kwok, and I'm currently a junior at MIT, and this is my obligatory online presence or whatever. This is my little corner of the internet, where I talk about random projects and ideas and stuff. If you want to contact me or read obnoxiously nostalgic autobiographical trivia, check out the about section.

Wikipedia offers "byte index" files alongside every XML dump, which make it possible to extract a single page without uncompressing the whole dump. This is a very low-level approach, but it definitely enables you to occasionally look up articles in your own local encyclopedia.

Make sure you have all required commands installed before running the script:

- dd: for extracting a binary file segment

Download a Wikipedia multistream dump + its index file in any number of languages. One pair of files would need to look like this:

- wiki-latest-pages-articles-multistream-index.txt (decompressed)

You can store those files anywhere on your local system; you just need to set an environment variable WIKI_DOWNLOADS to the containing folder before you run the offlineWikiReader.sh script.
A shell script for searching Wikipedia index files and extracting single page content straight from the related compressed Wikipedia XML dumps. The key feature is that it operates on the compressed content within seconds (as opposed to uncompressing dozens of gigabytes beforehand).
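To make the index-based lookup concrete, here is a rough Python sketch of the same idea. It is not the offlineWikiReader.sh script (which relies on tools such as dd); it swaps in Python's standard `bz2` module. It assumes the index lines follow the `offset:page_id:title` layout used by the multistream index files, and the function names (`find_offset`, `read_stream`, `extract_page`) are made up for illustration.

```python
import bz2
from typing import Iterable, Optional
from xml.sax.saxutils import escape


def find_offset(index_lines: Iterable[str], title: str) -> Optional[int]:
    """Return the byte offset of the bz2 stream that contains `title`."""
    for line in index_lines:
        # Titles may contain colons, so split on the first two only.
        offset, _page_id, page_title = line.rstrip("\n").split(":", 2)
        if page_title == title:
            return int(offset)
    return None


def read_stream(dump_path: str, offset: int, block_size: int = 64 * 1024) -> bytes:
    """Decompress the single bz2 stream that starts at `offset` in the dump."""
    decompressor = bz2.BZ2Decompressor()
    pieces = []
    with open(dump_path, "rb") as dump:
        dump.seek(offset)
        # Stop at the end of this one stream; the rest of the dump is never read.
        while not decompressor.eof:
            block = dump.read(block_size)
            if not block:
                break
            pieces.append(decompressor.decompress(block))
    return b"".join(pieces)


def extract_page(xml_fragment: str, title: str) -> Optional[str]:
    """Cut the <page> element for `title` out of a decompressed stream.

    Naive string matching, good enough for a sketch; a real tool might
    prefer a proper XML parser.
    """
    marker = "<title>" + escape(title) + "</title>"
    pos = xml_fragment.find(marker)
    if pos < 0:
        return None
    start = xml_fragment.rfind("<page>", 0, pos)
    end = xml_fragment.find("</page>", pos)
    if start < 0 or end < 0:
        return None
    return xml_fragment[start:end + len("</page>")]
```

A lookup then chains the three steps: find the offset in the index, decompress only that stream, and pull the page out of the resulting XML fragment. Only one small stream is ever decompressed, which is why the approach works within seconds on a dump of dozens of gigabytes.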
